Xinrui Cui

About Me

I am an Assistant Professor in the Department of Computer Science and Engineering at the University of North Texas. I spent one year as a postdoctoral scholar at the University of British Columbia, working with Rabab Ward. I obtained my PhD at the University of British Columbia, advised by Z. Jane Wang.

My research operates at the convergence of Computer Graphics, Computer Vision, and Artificial Intelligence. The ultimate goal of my work is to enhance human life through advanced AI systems that strike a balance between environmental understanding and adaptive interaction. I am particularly driven by the development of a new generation of 3D reconstruction and rendering algorithms for human characters and complex scenes. By bridging classical computer graphics pipelines with deep learning techniques, I aim to create systems that are high-performing, interpretable, and physically grounded.

Research Interests

- Neural Representations & Rendering

- Physics-Grounded Dynamics

- 3D & Video World Simulators

- Generative AI & Large-scale Scene Generation

- Explainable AI (XAI)

Research Vision & Goals

My long-term vision is to develop AI technologies capable of perceiving, understanding, and interacting with 3D objects, people, and environments. To achieve this, my research group focuses on four primary objectives:

- High-Fidelity 3D Reconstruction: Developing robust methods for high-quality 3D reconstruction of real-world scenes from sparse or limited inputs.

- Controllable & Expressive Generative Models: Developing frameworks that pair high-fidelity, creative synthesis with precise user constraints, enabling editing and manipulation of specific attributes such as geometry, style, or semantic concepts.

- Physics-Informed World Simulators: Advancing the frontier of physics-grounded world models that recreate the physical world and simulate its response to physical actions.

- Explainable & Trustworthy AI: Transforming opaque neural networks into transparent, human-understandable systems with explicit semantic control, ensuring that AI-driven decisions remain interpretable and reliable.

Join the Lab

I am actively looking for motivated PhD and MS students to join my research group. If you are interested in Computer Vision, Graphics, or the future of 3D AI, I encourage you to reach out. Our lab offers a collaborative environment, and our research focuses on transparency, controllability, and user trust in AI systems.

Prospective Students: Please feel free to get in touch via email with your CV and a brief description of your research interests and how they align with our current goals.

Current Members

PhD Students:

- Haiyan Sun (Fall 2024 - Present)

- Guiling Deng (Fall 2025 - Present)

Master's Students:

- Yuxuan Liu (Fall 2025 - Present)

- Panam Pareshkumar Dodia (Graduated in 2025)

Undergraduate Students:

- Lance Joseph Trasporto (Spring 2026 - Present)

Teaching

UNT CSCE 5210 — Fundamentals of Artificial Intelligence

- Spring 2026

UNT CSCE 4201 — Introduction to Artificial Intelligence

- Spring 2026

UNT CSCE 4205 — Introduction to Machine Learning

- Fall 2025, Fall 2024

UNT CSCE 5215 — Machine Learning

- Fall 2025, Spring 2025, Fall 2024, Spring 2024

UNT CSCE 6940 — Individual Research

- Spring 2026, Fall 2025, Spring 2025

UNT CSCE 5950 — Master’s Thesis

- Spring 2026, Fall 2025, Spring 2025

Selected Publications

* Corresponding author

† Co-first author


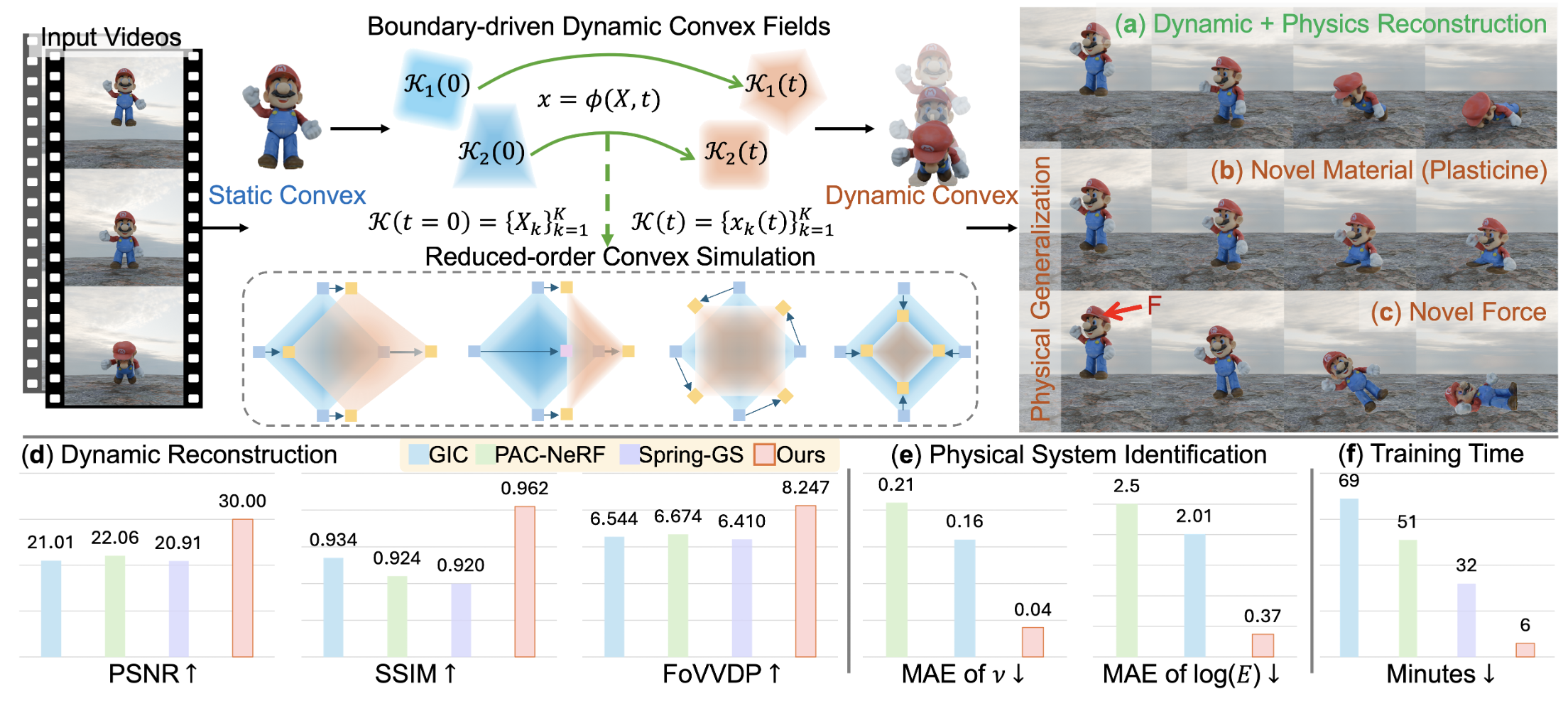

arXiv, 2026. A physics-informed framework that unifies visual rendering and physical simulation by representing deformable radiance fields as boundary-driven 3D dynamic convex primitives governed by reduced-order continuum mechanics, enabling high-fidelity reconstruction of appearance, geometry, and physical properties.


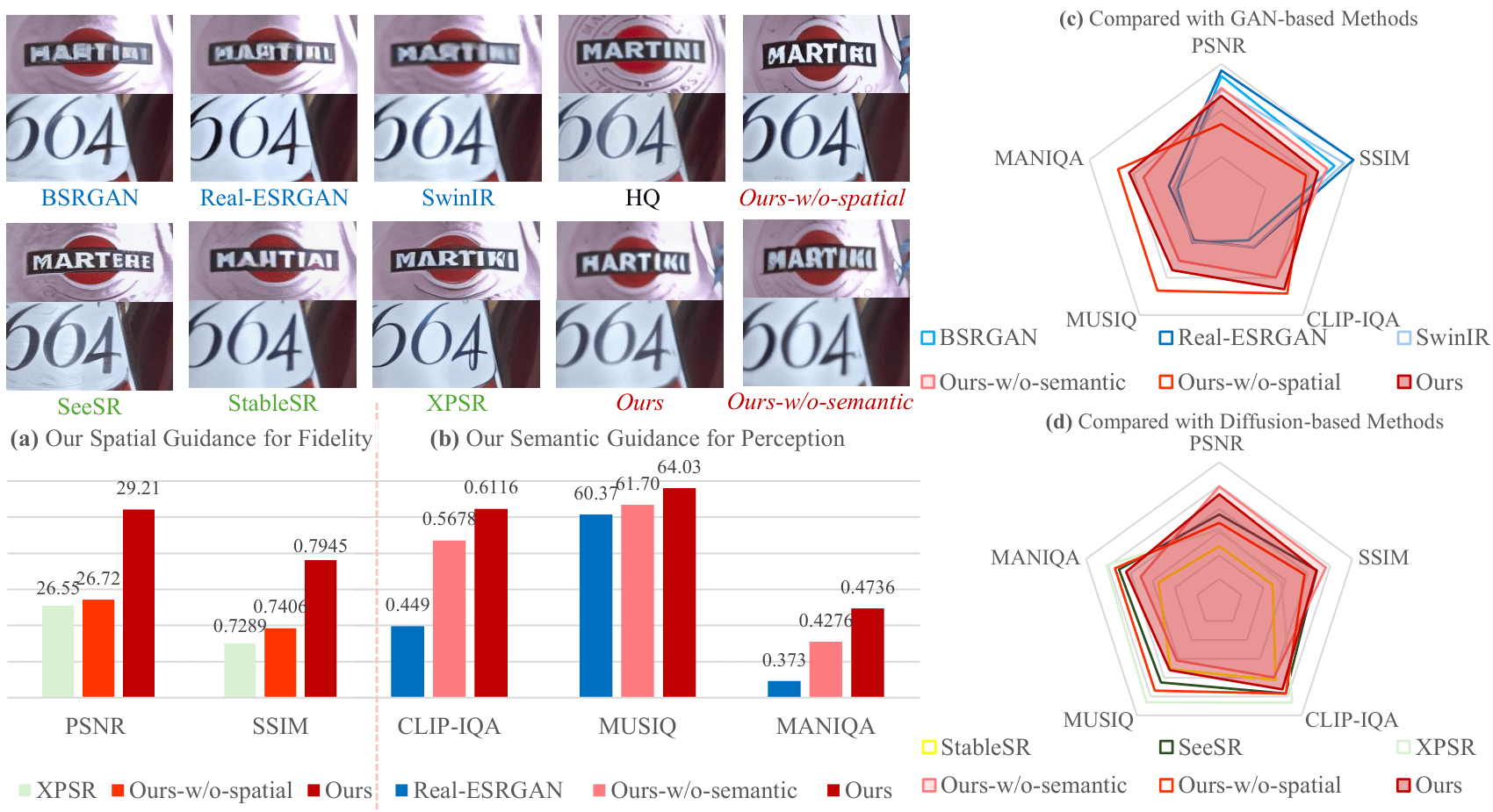

arXiv, 2026. A spatial-semantic guided diffusion framework that integrates spatially grounded textual guidance and semantically enhanced visual guidance to achieve a better balance in the perception-distortion trade-off, producing super-resolution results that are both perceptually realistic and structurally faithful.


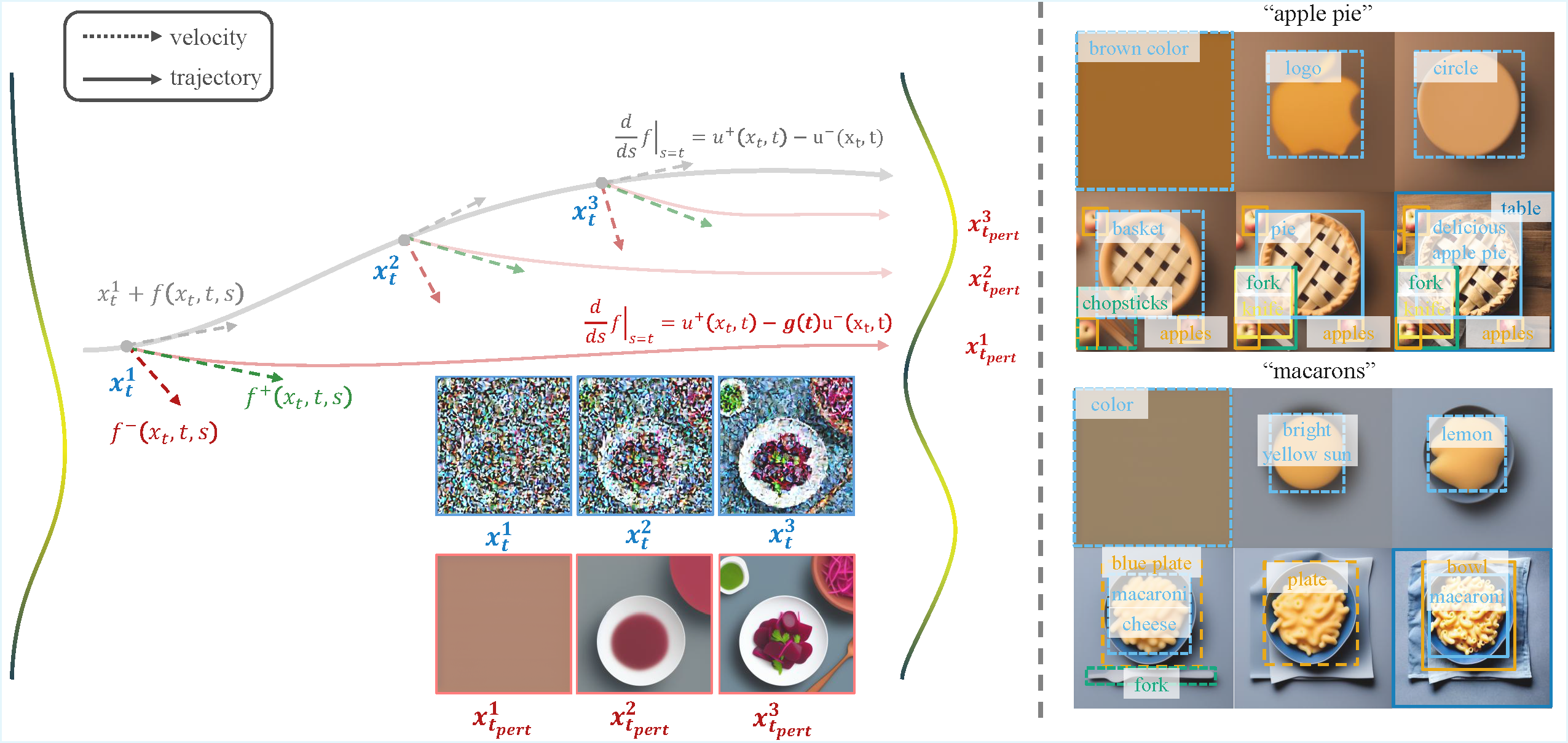


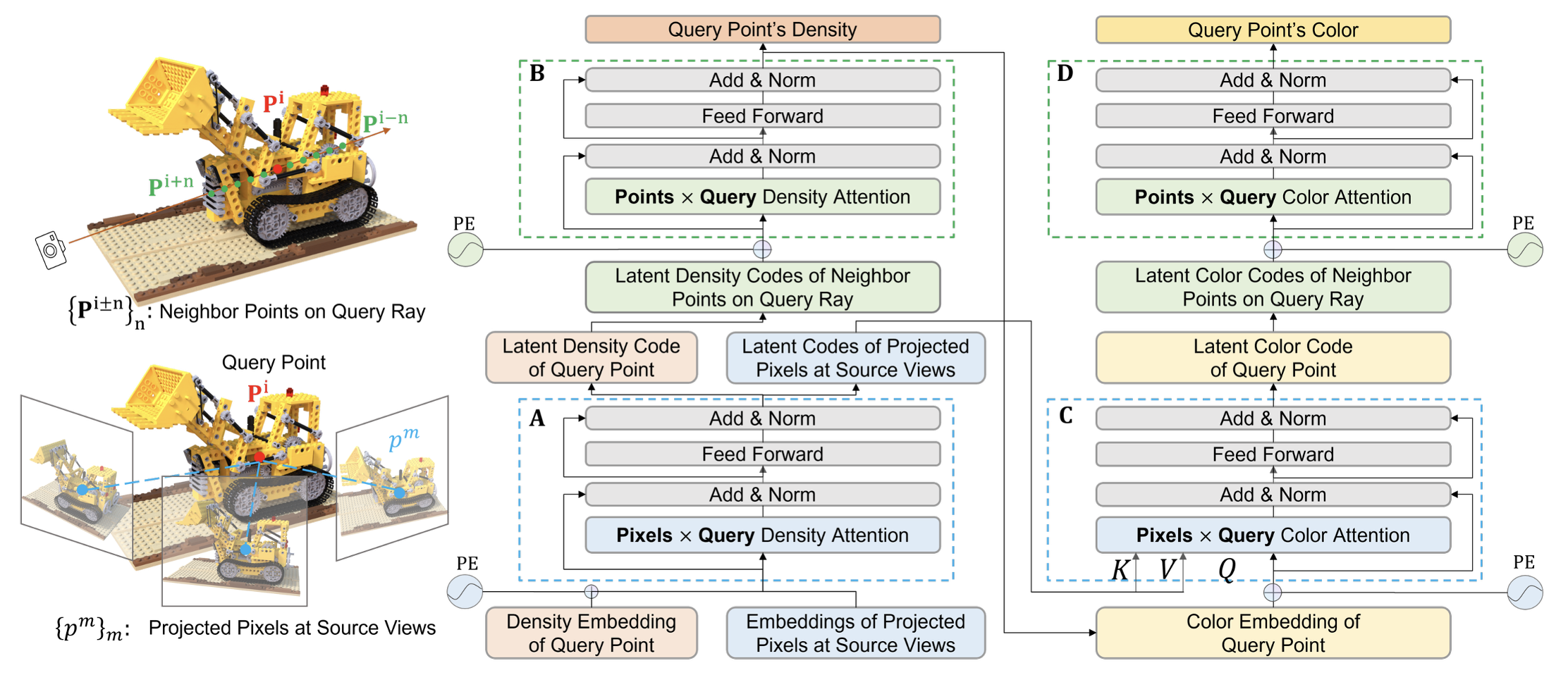

In ACM International Conference on Multimedia, Melbourne, VIC, Australia, 2024. A unified, end-to-end Transformer-based framework for generalizable 3D scene representation and rendering that improves model interpretability and performance.


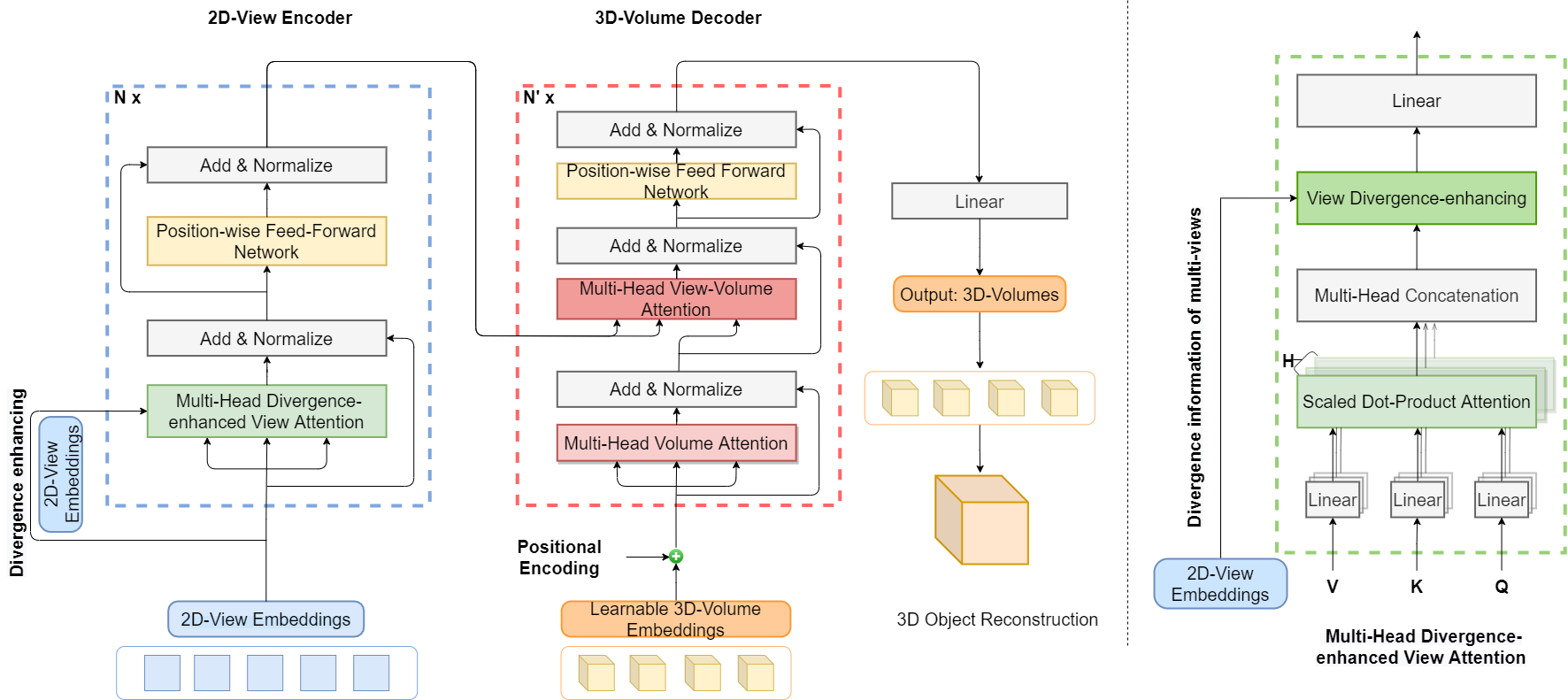

In International Conference on Computer Vision (ICCV Oral), Oct 2021. A novel Transformer-based framework for multi-view 3D reconstruction that effectively captures long-range dependencies across views, leading to improved reconstruction quality and robustness.


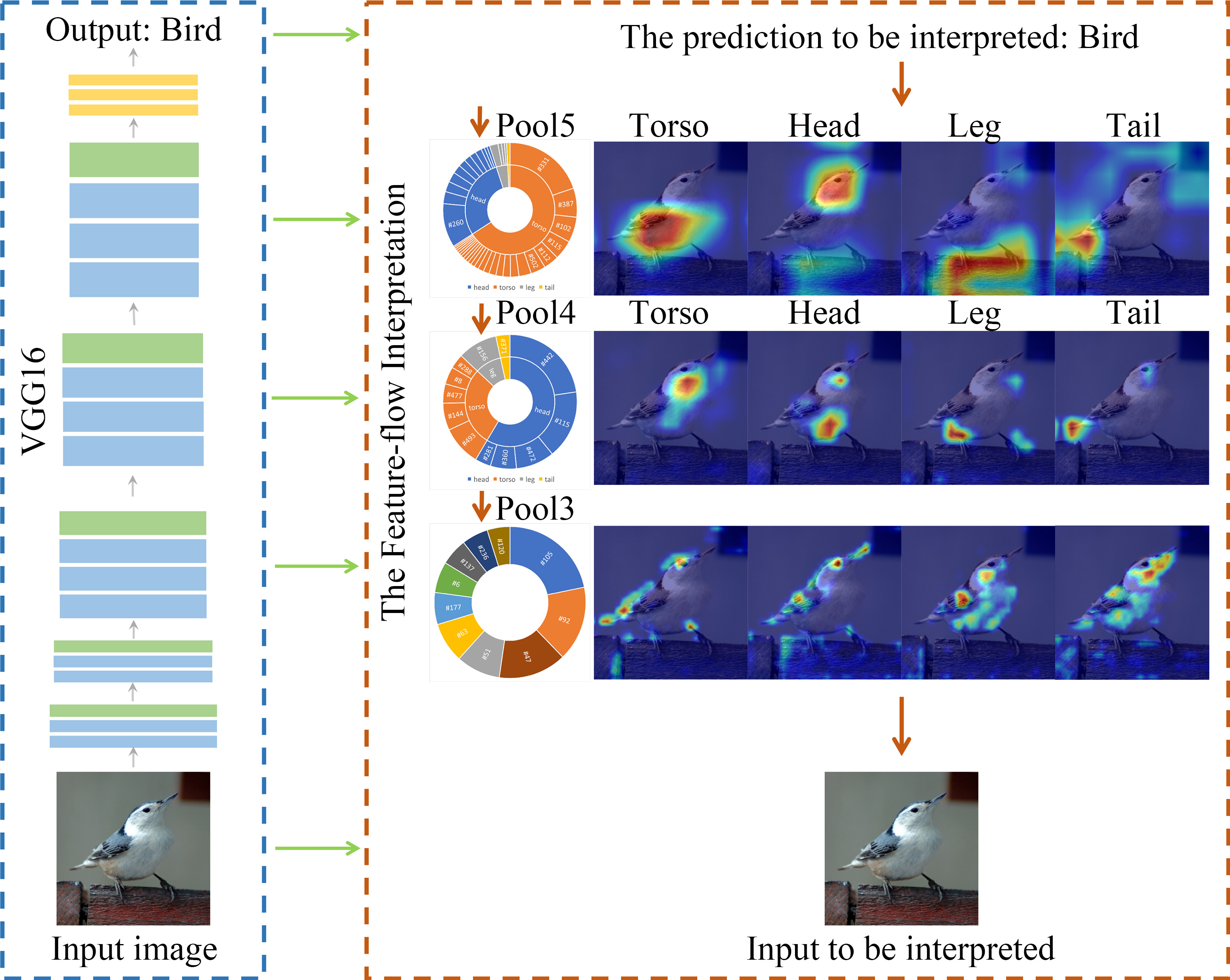

IEEE Transactions on Image Processing, 2021. An interpretation scheme that explains CNN decision-making by decomposing high-level semantic concepts backward into a hierarchy of lower-level visual concepts across network layers, mimicking the bottom-up hierarchical logic of human visual recognition.


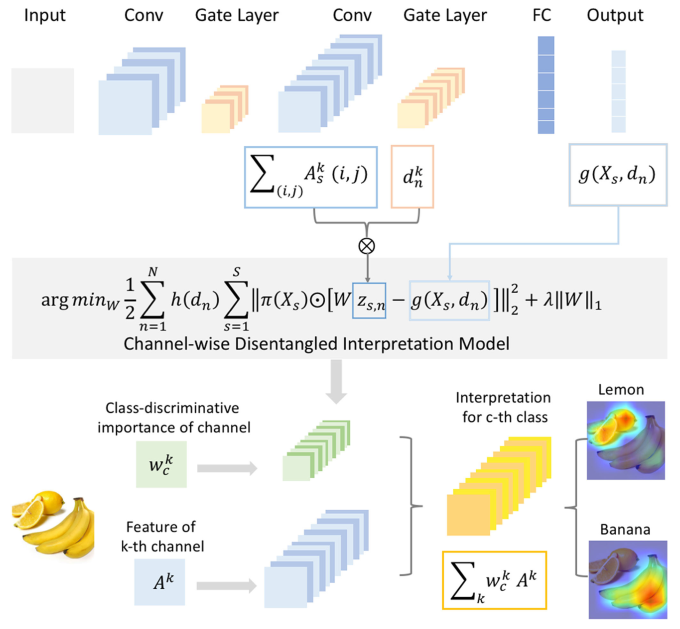

IEEE Transactions on Neural Networks and Learning Systems, 2020. A channel-wise disentangled interpretation method that identifies the most influential channels in a CNN for a given prediction and disentangles their contributions to different visual concepts, providing a more detailed and interpretable explanation of the model's decision-making process.


IEEE Transactions on Multimedia, 2020. A feature-flow interpretation method that learns the flow of information through a CNN by tracking the activation patterns of features across layers, revealing how different features contribute to the final prediction.


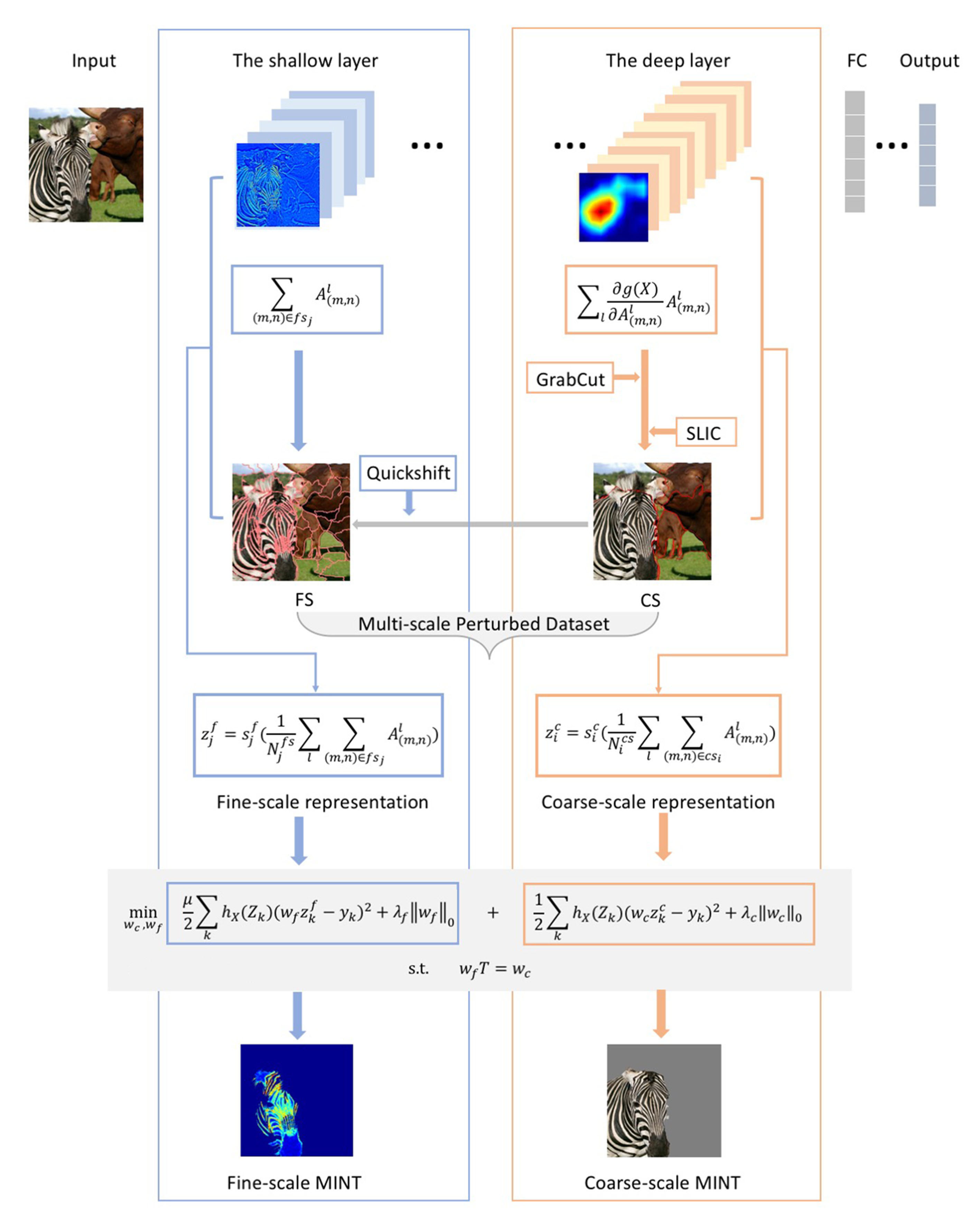

IEEE Transactions on Multimedia, 2019. A multi-scale interpretation model that provides hierarchical explanations of CNN predictions by interpreting the model's decision-making process at multiple levels of abstraction.


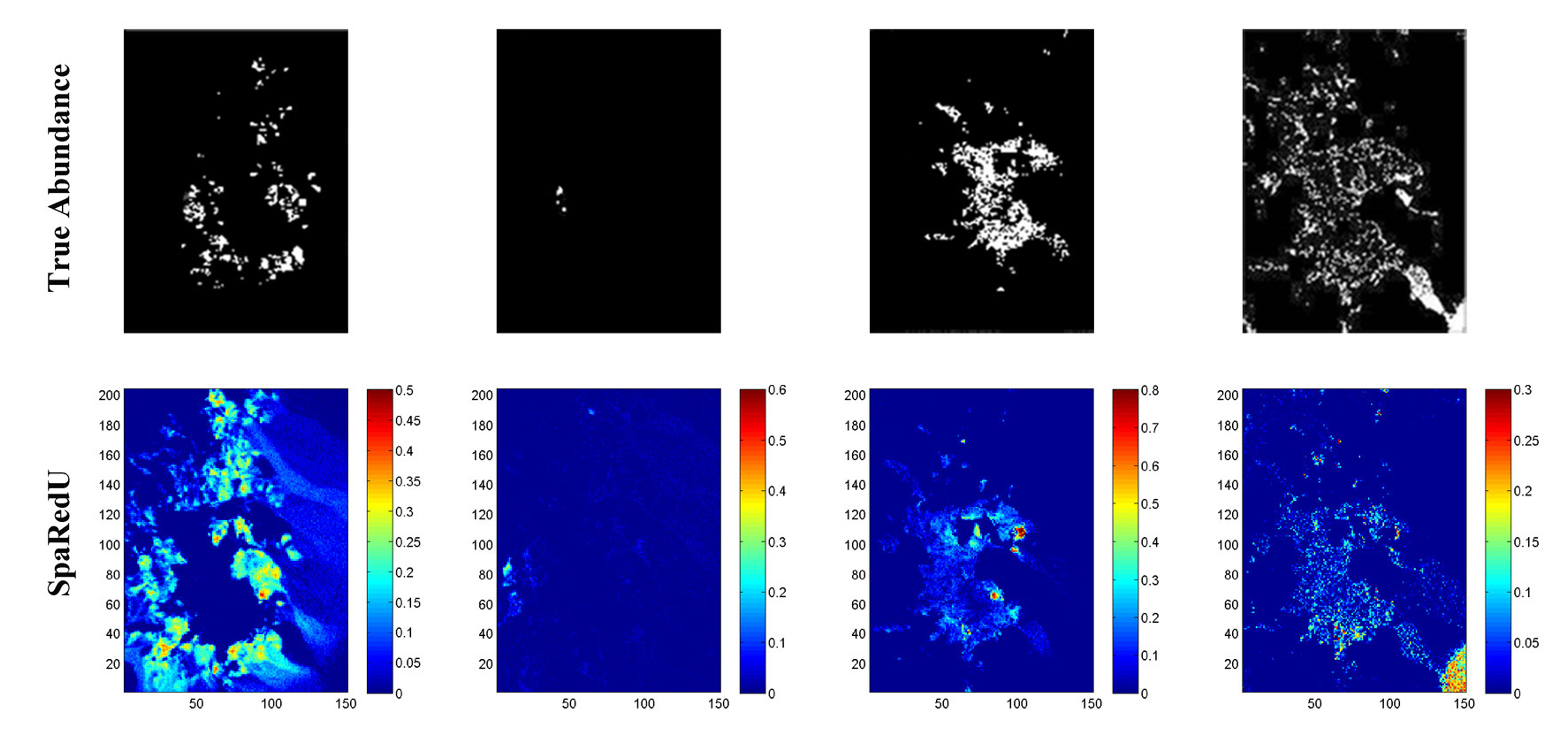

IEEE Transactions on Geoscience and Remote Sensing, 2018. A robust sparse unmixing method for hyperspectral imagery that incorporates spatial information and a novel regularization term to improve the accuracy and robustness of unmixing results.
